Mathematical Models of Cognitive Learning: Decoding the Mind with Equations

At the heart of every human advancement lies a profound question: How do we learn? This inquiry, once the domain of philosophers and educators, is now being rigorously explored using the language of mathematics. As cognitive science, neuroscience, and artificial intelligence evolve, mathematical models are emerging as indispensable tools for understanding how knowledge is acquired, retained, and applied—not only by humans but increasingly by intelligent machines.

Far from reducing the richness of cognition to mere numbers, these models aim to capture the underlying patterns and principles that govern learning. Whether through probabilistic reasoning, dynamic systems, or optimization frameworks, mathematics offers a way to move from descriptive theories of the mind to precise, predictive frameworks.

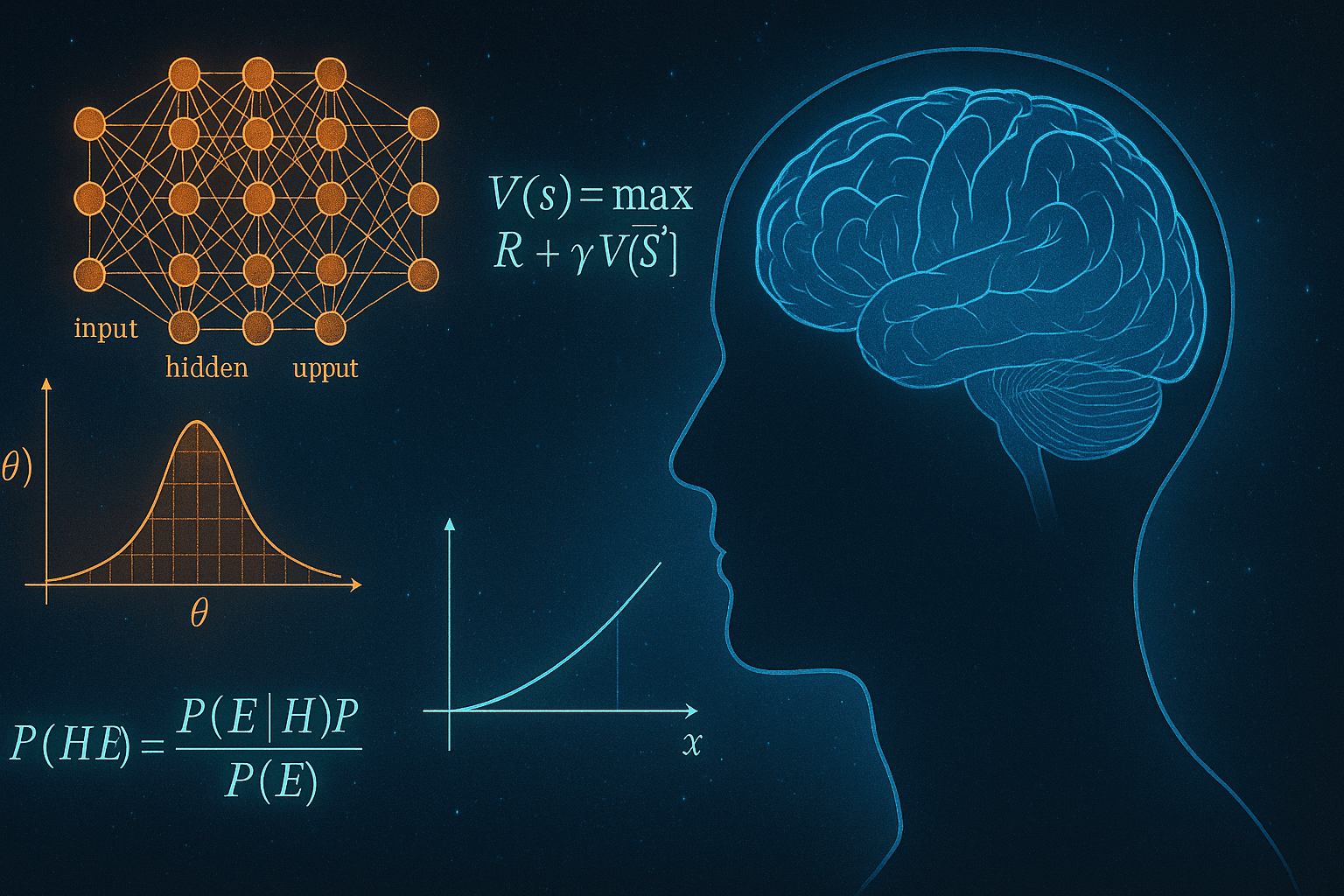

Bayesian Learning: Probability as Cognition

One of the most influential paradigms in modern cognitive modeling is Bayesian learning. The framework is rooted in Bayes’ Theorem, a foundational result in probability theory, and posits that learners maintain a set of beliefs about the world, each held with some degree of probability, and continuously update them as new evidence becomes available.
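For reference, here is Bayes’ Theorem in the form these models typically use. H is a hypothesis (for example, “this bird is a robin”) and D is the observed data. P(H) is the prior, P(D | H) is the likelihood, and P(H | D) is the posterior. The denominator sums over every hypothesis h under consideration.

```latex
P(H \mid D) = \frac{P(D \mid H)\,P(H)}{P(D)},
\qquad
P(D) = \sum_{h} P(D \mid h)\,P(h)
```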

Imagine a student learning to distinguish between different bird species. With each new bird observed, the brain evaluates whether the features match existing categories or suggest a new one. Mathematically, this process resembles posterior updating. Prior beliefs about each category are weighted by the likelihood of the observed features under that category, and the resulting posterior becomes the prior for the next observation.
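Here is a minimal sketch of this updating in Python. It rests on some loudly stated assumptions: three hypothetical species, made-up probabilities for two binary features, and features treated as independent given the species (a naive Bayes simplification). It only reweights a fixed set of existing categories. Deciding when to open a new category requires richer models than this one.

```python
# Toy posterior updating over bird categories.
# All species names and feature probabilities are hypothetical.

# P(feature present | species) for two binary features
LIKELIHOODS = {
    "robin":   {"red_breast": 0.9,  "long_tail": 0.2},
    "sparrow": {"red_breast": 0.05, "long_tail": 0.3},
    "magpie":  {"red_breast": 0.01, "long_tail": 0.9},
}

def update(prior, observation):
    """Return the posterior over species after one observed bird.

    `observation` maps each feature name to True/False; features are
    assumed conditionally independent given the species (naive Bayes).
    """
    unnormalized = {}
    for species, p_prior in prior.items():
        likelihood = 1.0
        for feature, present in observation.items():
            p = LIKELIHOODS[species][feature]
            likelihood *= p if present else 1.0 - p
        unnormalized[species] = p_prior * likelihood
    evidence = sum(unnormalized.values())  # P(D), the normalizing constant
    return {s: v / evidence for s, v in unnormalized.items()}

# Start from a uniform prior and update on two sightings in sequence;
# each posterior becomes the prior for the next observation.
belief = {s: 1 / 3 for s in LIKELIHOODS}
for obs in [{"red_breast": True, "long_tail": False},
            {"red_breast": True, "long_tail": False}]:
    belief = update(belief, obs)

print(max(belief, key=belief.get))  # robin
```

Two birds with a red breast and a short tail push nearly all of the belief onto the robin hypothesis.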

Bayesian models elegantly account for uncertainty, prior experience, and the probabilistic nature of perception. They are widely used not only in cognitive psychology but also in adaptive learning technologies, where educational systems dynamically adjust to a learner’s proficiency in real time.
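The article does not name a specific system. One well-known Bayesian model of this kind is Bayesian Knowledge Tracing, which estimates the probability that a learner has mastered a skill from a sequence of right and wrong answers. The sketch below assumes the standard four-parameter form: prior knowledge, learning, guess, and slip probabilities. The parameter values are illustrative and were not fitted to any data.

```python
def bkt_update(p_known, correct, p_transit=0.1, p_guess=0.2, p_slip=0.1):
    """One Bayesian Knowledge Tracing step (illustrative parameter values).

    First condition the mastery estimate on the observed answer, then
    allow for learning on this practice opportunity.
    """
    if correct:
        # A correct answer comes from knowing without slipping, or from guessing
        num = p_known * (1 - p_slip)
        den = num + (1 - p_known) * p_guess
    else:
        # An error comes from slipping, or from not knowing and not guessing
        num = p_known * p_slip
        den = num + (1 - p_known) * (1 - p_guess)
    posterior = num / den
    # Probability the skill is learned on this opportunity if not already known
    return posterior + (1 - posterior) * p_transit

p = 0.3  # hypothetical prior probability that the skill is already known
history = []
for answer in [True, True, False, True, True]:
    p = bkt_update(p, answer)
    history.append(round(p, 3))
print(history)
```

A correct answer raises the mastery estimate and an error lowers it. A tutoring system could, for example, keep assigning practice until the estimate crosses a chosen threshold (a hypothetical policy).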

Dynamical Systems and the Time Course of Learning

Another powerful class of models involves dynamical systems, particularly differential equations that describe how knowledge evolves over time. These models often quantify learning as a rate of change—capturing how quickly new information is absorbed and how rapidly it decays.
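As a concrete illustration, consider a toy model of our own choosing (not a standard named model). Knowledge K, between 0 and 1, grows in proportion to what is not yet known and decays in proportion to what is known: dK/dt = a(1 - K) - d*K. The sketch integrates this equation numerically and checks the result against the closed-form solution. The rates a and d are hypothetical.

```python
import math

def simulate(k0, a, d, t_end, dt=0.001):
    """Forward-Euler integration of the toy model dK/dt = a*(1 - K) - d*K."""
    k = k0
    for _ in range(round(t_end / dt)):
        k += dt * (a * (1 - k) - d * k)
    return k

def exact(k0, a, d, t):
    """Closed-form solution of the same linear ODE."""
    k_star = a / (a + d)  # equilibrium where acquisition balances decay
    return k_star + (k0 - k_star) * math.exp(-(a + d) * t)

a, d = 0.5, 0.1  # hypothetical acquisition and decay rates
print(simulate(0.0, a, d, 10.0), exact(0.0, a, d, 10.0))
```

Knowledge settles at the equilibrium a/(a + d), where acquisition and decay balance. With a = 0.5 and d = 0.1, that equilibrium is about 0.83.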

For instance, the Ebbinghaus forgetting curve, which describes how memory fades with time, is commonly approximated by an exponential decay function. Extensions of this model account for spaced repetition. Each review slows subsequent forgetting, which lets the model predict optimal intervals for reviewing material to maximize long-term retention.
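Here is a minimal sketch of the exponential approximation, R(t) = exp(-t/S), where S is a memory “stability” parameter (our notation). The scheduling rule is an assumption made for illustration: review whenever predicted retention falls to a threshold, and let each review multiply S by a fixed growth factor. Real spaced-repetition systems use their own, more elaborate update rules. Time units are arbitrary; read them as days.

```python
import math

def retention(t, stability):
    """Exponential forgetting curve R(t) = exp(-t / S)."""
    return math.exp(-t / stability)

def review_schedule(stability, threshold=0.9, growth=2.0, reviews=5):
    """Intervals between reviews under a hypothetical rule: review when
    retention reaches `threshold`, and each review multiplies stability
    by `growth`."""
    intervals = []
    for _ in range(reviews):
        interval = -stability * math.log(threshold)  # solve R(t) = threshold
        intervals.append(interval)
        stability *= growth
    return intervals

print([round(x, 2) for x in review_schedule(stability=5.0)])
```

Solving R(t) = threshold gives t = -S ln(threshold). With a growth factor of 2, each interval is double the previous one. This produces the expanding schedule characteristic of spaced repetition.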

These continuous-time models are particularly valuable in neuroscience, where learning is often represented as the adjustment of synaptic weights—governed by temporal dynamics that can be described with mathematical precision.
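One classical example of such weight dynamics is Oja’s rule, a Hebbian update with a built-in normalizing term. It is our choice of illustration; the article does not name a specific rule. The sketch applies the discrete-time form to synthetic two-dimensional inputs (hypothetical data). It then checks the known result: the weight vector settles at unit length, aligned with the direction of greatest input variance.

```python
import math
import random

def oja(samples, eta=0.005, w=(0.1, 0.1)):
    """Discrete-time Oja's rule for one linear neuron with two inputs:
    y = w . x, then w <- w + eta * y * (x - y * w)."""
    w = list(w)
    for x in samples:
        y = w[0] * x[0] + w[1] * x[1]  # neuron output
        # Hebbian term eta*y*x minus a decay term that keeps |w| bounded
        w = [w[i] + eta * y * (x[i] - y * w[i]) for i in range(2)]
    return w

# Hypothetical inputs: large variance along direction u, small noise across it.
rng = random.Random(0)
theta = math.pi / 6
u = (math.cos(theta), math.sin(theta))
samples = []
for _ in range(20000):
    s, n = rng.gauss(0, 2.0), rng.gauss(0, 0.5)
    samples.append((s * u[0] - n * u[1], s * u[1] + n * u[0]))

w = oja(samples)
norm = math.hypot(w[0], w[1])
alignment = abs(w[0] * u[0] + w[1] * u[1]) / norm  # |cos| of angle between w and u
print(round(norm, 3), round(alignment, 3))
```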

Reinforcement Learning: Learning Through Reward

Originally rooted in behavioral psychology, reinforcement learning (RL) has evolved into a sophisticated mathematical framework central to both cognitive science and artificial intelligence. Here, learning is modeled as the optimization of actions based on rewards and penalties.
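Here is a minimal sketch of learning from reward in the simplest setting: a multi-armed bandit with three actions whose average rewards are hypothetical. One average is negative, so choosing that action is, on average, a penalty. The learner uses epsilon-greedy exploration and the incremental update Q[a] <- Q[a] + alpha * (r - Q[a]). This is one standard textbook formulation, not the only one.

```python
import random

def run_bandit(true_means, steps=5000, epsilon=0.1, alpha=0.1, seed=0):
    """Epsilon-greedy action selection with the constant step-size update
    Q[a] <- Q[a] + alpha * (reward - Q[a]). Rewards are Gaussian around each
    action's (hypothetical) true mean; negative rewards act as penalties."""
    rng = random.Random(seed)
    q = [0.0] * len(true_means)     # learned value estimate per action
    counts = [0] * len(true_means)  # how often each action was chosen
    for _ in range(steps):
        if rng.random() < epsilon:
            a = rng.randrange(len(q))                  # explore at random
        else:
            a = max(range(len(q)), key=q.__getitem__)  # exploit best estimate
        reward = rng.gauss(true_means[a], 0.5)
        q[a] += alpha * (reward - q[a])  # nudge estimate toward the outcome
        counts[a] += 1
    return q, counts

q, counts = run_bandit([-0.5, 1.0, 0.3])
print([round(v, 2) for v in q], counts)
```

Most choices end up on the action with the highest mean reward. The occasional random exploration keeps the other estimates from going stale.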

In humans, RL frameworks align with how we form habits or make decisions, evaluating outcomes and adjusting behavior to maximize long-term benefit. In AI, the same principles have enabled systems to master games such as Go and chess and to learn control policies for robots.

Mathematically, RL is often formalized with Markov decision processes (MDPs) and Bellman equations. These equations express the value of a state (or of an action taken in that state) recursively, as the immediate reward plus the discounted value of the states that follow. These tools provide not only a theory of decision-making but also a bridge between cognitive psychology and machine learning.
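To make the Bellman recursion concrete, here is value iteration on a toy deterministic MDP made up for illustration. It is a four-state corridor: moving into the last (terminal) state pays a reward of 1, and every other move pays 0. Each sweep applies the Bellman optimality update V(s) = max over actions a of [r(s, a) + gamma * V(s')]. Here s' is the state that action a leads to, and gamma is a discount factor (0.9 here).

```python
def value_iteration(n_states=4, gamma=0.9, tol=1e-10):
    """Value iteration on a hypothetical deterministic corridor MDP.

    States 0..n_states-1; the last state is terminal. Actions move one step
    left or right (walls at both ends); entering the terminal state pays 1,
    every other transition pays 0.
    """
    terminal = n_states - 1
    actions = {"left": -1, "right": +1}
    v = [0.0] * n_states
    while True:
        delta = 0.0
        for s in range(n_states):
            if s == terminal:
                continue  # terminal value stays 0
            candidates = []
            for step in actions.values():
                nxt = min(max(s + step, 0), terminal)
                reward = 1.0 if nxt == terminal else 0.0
                candidates.append(reward + gamma * v[nxt])  # Bellman backup
            best = max(candidates)
            delta = max(delta, abs(best - v[s]))
            v[s] = best
        if delta < tol:  # stop once a full sweep changes nothing
            return v

v = value_iteration()
print([round(x, 3) for x in v])
```

Moving away from the goal, the values come out as 1.0, 0.9, and 0.81. Each step of distance discounts the eventual reward by another factor of gamma.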

From Classroom to Cortex: Applications and Implications

These mathematical models are not just theoretical. They underpin real-world applications across education, AI, and neuroscience:

Personalized learning platforms (like intelligent tutoring systems) use Bayesian and RL models to tailor instruction to a student’s unique learning curve.

Cognitive architectures in robotics integrate probabilistic models and decision-theoretic learning to approximate human adaptability in machines.

In brain imaging studies, models of learning are used to interpret how different regions encode prediction errors, memory decay, or learning rates.
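In model-based imaging analyses, a common approach is to fit a learning model to behavior and then use its trial-by-trial prediction errors as regressors for the brain signal. Below is a minimal sketch of that first step. It assumes the Rescorla-Wagner rule (one common choice) and a hypothetical cue that is rewarded on every trial.

```python
def prediction_errors(rewards, alpha=0.3, v0=0.0):
    """Trial-by-trial Rescorla-Wagner prediction errors for a single cue.

    delta_t = r_t - V_t, then V_{t+1} = V_t + alpha * delta_t.
    """
    v, deltas = v0, []
    for r in rewards:
        delta = r - v        # how surprising this trial's outcome was
        deltas.append(delta)
        v += alpha * delta   # expectation moves toward the outcome
    return deltas

# Hypothetical reward sequence: the cue is rewarded on every trial.
deltas = prediction_errors([1, 1, 1, 1, 1, 1, 1, 1])
print([round(d, 3) for d in deltas])
```

With the reward fixed at 1 and V starting at 0, the error on trial t is exactly (1 - alpha)^t. The error therefore shrinks as the reward becomes predictable.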

By making learning quantifiable, these models allow us to design more effective educational strategies, build more human-like AI, and probe the very architecture of cognition.

Toward a Unified Science of Learning

Ultimately, mathematical models of learning are more than computational tools—they represent a conceptual shift in how we understand the mind. Just as Newton’s laws brought order to the chaos of motion, and Maxwell’s equations unified electricity and magnetism, these models promise to reveal deep principles governing thought and adaptation.

As a mathematician, I find it profoundly exciting that learning itself—one of the most abstract and dynamic human experiences—can be expressed in precise, elegant equations. By continuing to refine these models, we are not only advancing science and technology but also gaining insight into what it means to think, to grow, and to know.