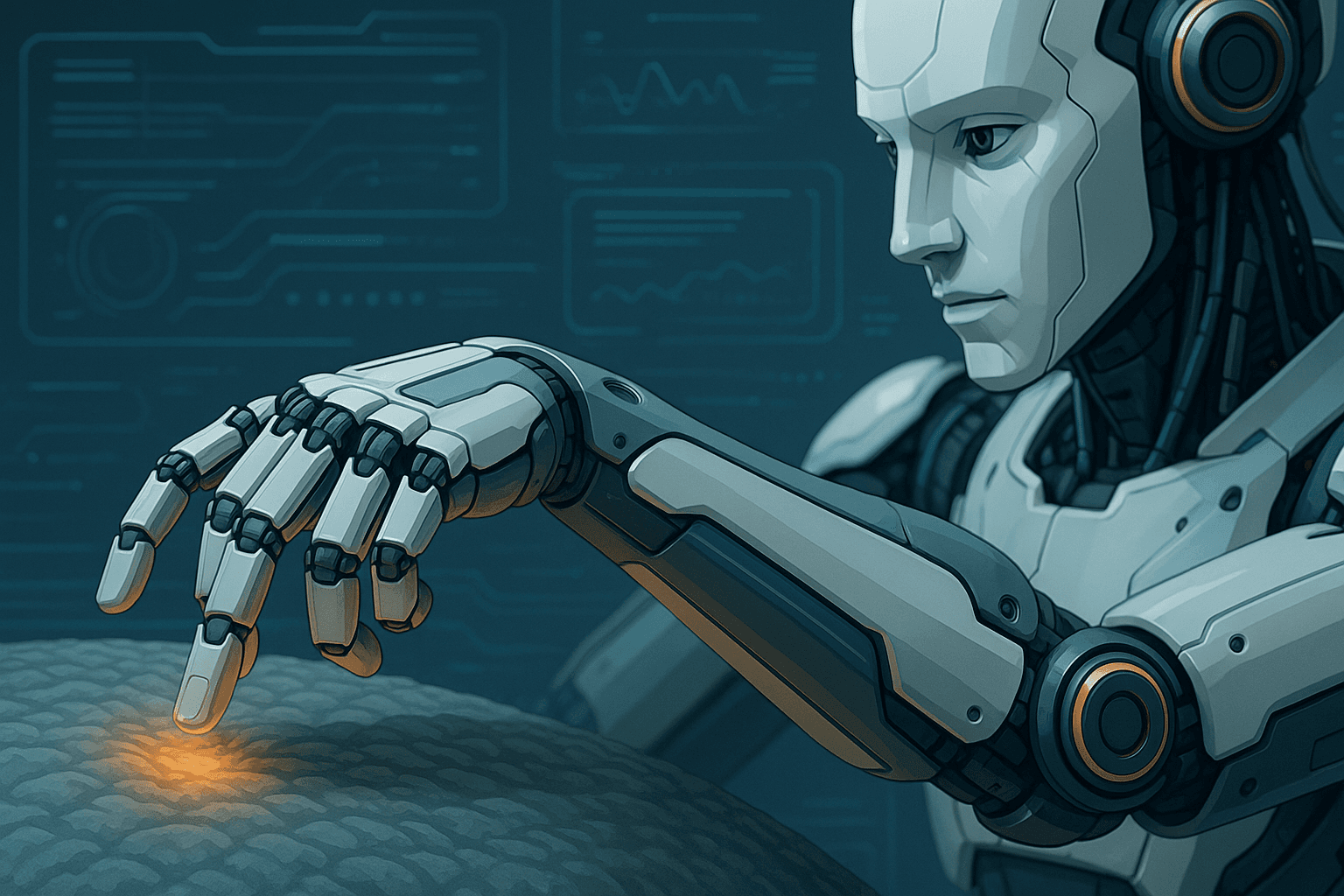

Tactile AI: Giving Robots a Sense of Touch

We take it for granted when we feel the smoothness of glass or the pressure of a handshake. For robots, though, touch has long been elusive. Advances in tactile AI are starting to change that: machines are gaining the ability to feel, interpret, and respond to physical contact with growing sensitivity.

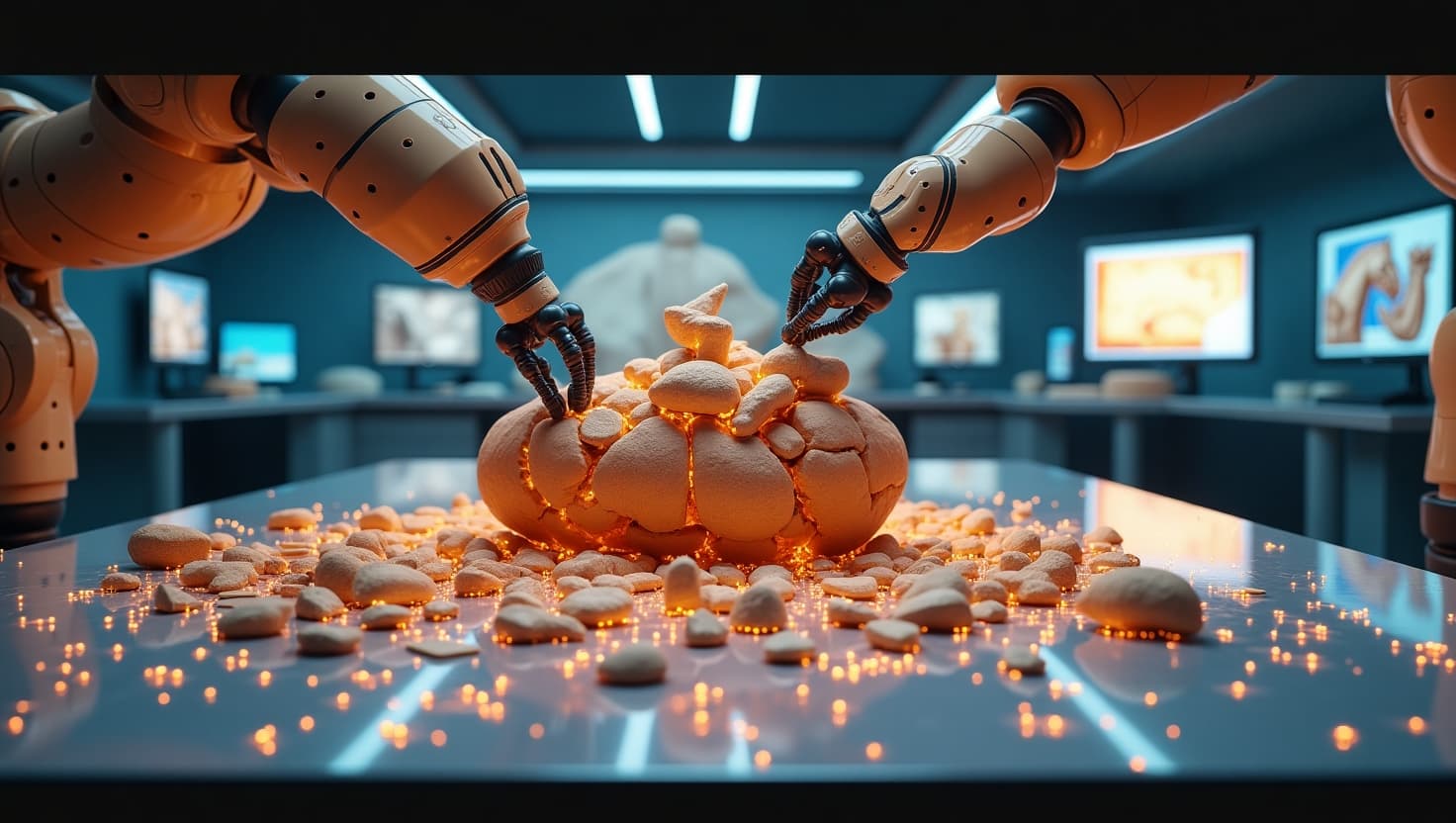

Tactile AI combines touch sensors with machine learning, enabling robots to detect texture, shape, pressure, and even temperature. These sensors can be embedded in robotic fingers, skins, or grippers, creating feedback loops that mimic our own sense of touch.

With this capability, a robot can determine whether it’s holding a soft sponge or a fragile egg — and adjust its grip accordingly. This is crucial for delicate tasks in manufacturing, agriculture, and medicine, where traditional rigid robots often fall short.
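To make the "adjust its grip" idea concrete, here is a minimal sketch of a slip-driven grip loop in Python. Everything in it is an assumption for illustration: the `TactileReading` fields, the `next_grip_force` and `simulate_grasp` helpers, and the force values are hypothetical, and the "sensor" is simulated rather than read from real hardware. The idea is simply to start with a light grip, squeeze a little harder while the object is slipping, and never exceed a cap chosen for fragile items.

```python
from dataclasses import dataclass


@dataclass
class TactileReading:
    """One hypothetical sample from a fingertip sensor."""
    normal_force: float   # newtons currently applied to the object
    slip_detected: bool   # True if the object is sliding in the grip


def next_grip_force(current_force, reading, step=0.2, max_force=5.0):
    """Raise the grip force by one small step while slipping, else hold.

    The result never exceeds max_force, which stands in for a
    per-object safety cap (lower for an egg than for a sponge).
    """
    if reading.slip_detected:
        return min(current_force + step, max_force)
    return current_force


def simulate_grasp(required_force, max_force, step=0.2, start=0.2, max_iters=100):
    """Simulate the loop against an object that slips below required_force."""
    force = start
    for _ in range(max_iters):
        # Simulated sensor: the object slips until the grip is firm enough.
        reading = TactileReading(force, slip_detected=force < required_force)
        new_force = next_grip_force(force, reading, step, max_force)
        if new_force == force:  # grip is stable, or the cap was reached
            break
        force = new_force
    return force


egg_grip = simulate_grasp(required_force=1.0, max_force=1.5)
capped_grip = simulate_grasp(required_force=10.0, max_force=5.0)
```

In this toy model the egg ends up held just firmly enough to stop slipping, while an object that would need more than the cap is never squeezed past it. A real controller would also have to deal with sensor noise, timing, and the object's compliance.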

One breakthrough example is GelSight, an optical tactile sensor in which a camera behind a soft gel pad captures high-resolution images of whatever surface is pressed into it. Coupled with AI, it enables a robot to “see” what it’s touching, like reading Braille with digital fingers.

Tactile feedback also enhances safety. A robot that senses unexpected resistance can stop immediately, reducing the risk of injury in shared human-robot environments.
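The stop-on-resistance behavior can be sketched in a few lines of Python. This is an illustrative assumption, not how any particular robot implements it: `should_stop`, `run_motion`, and the 2-newton tolerance are hypothetical, and the force samples are made up. The sketch compares each measured contact force with the force the task is expected to produce and halts at the first sample that deviates too far.

```python
def should_stop(measured_force, expected_force, tolerance=2.0):
    """Return True when contact force deviates too far from what is expected.

    All values are in newtons; the tolerance is a hypothetical threshold.
    """
    return abs(measured_force - expected_force) > tolerance


def run_motion(force_samples, expected_force, tolerance=2.0):
    """Step through force samples and report where the motion would halt.

    Returns the index of the first out-of-tolerance sample, or None if
    the whole motion completes without unexpected resistance.
    """
    for index, force in enumerate(force_samples):
        if should_stop(force, expected_force, tolerance):
            return index  # stop here instead of pushing through
    return None


# A smooth motion with one sudden spike, e.g. bumping into something.
halt_index = run_motion([1.0, 1.1, 0.9, 6.0, 1.0], expected_force=1.0)
clean_run = run_motion([1.0, 1.2, 0.8], expected_force=1.0)
```

Here the motion halts at the fourth sample, where the force jumps to 6 N, and the clean run finishes without stopping. Production systems typically run checks like this in dedicated safety hardware with carefully validated limits rather than in application code.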

In prosthetics, tactile AI is helping create limbs that restore some sense of touch to amputees, allowing them to feel what they hold. That is a profound leap for quality of life.

Challenges remain in integrating touch with other sensory inputs and making sensors durable enough for real-world use. But as robots gain touch, they lose clumsiness — and gain the subtlety and intuition needed to work seamlessly alongside us.

The future isn’t just hands-on — it’s hands-feeling.