Emotion Recognition in Human-Robot Interaction

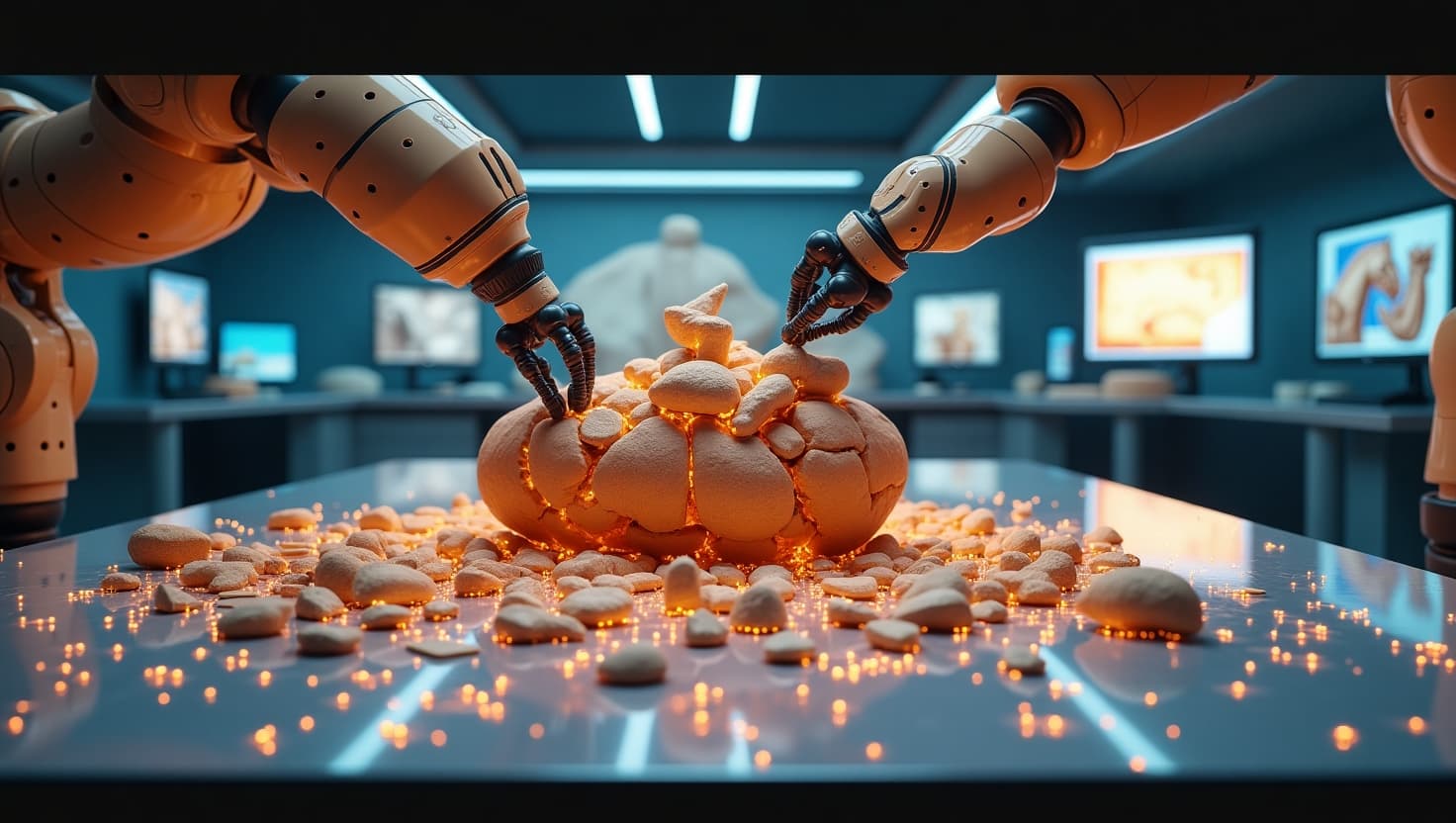

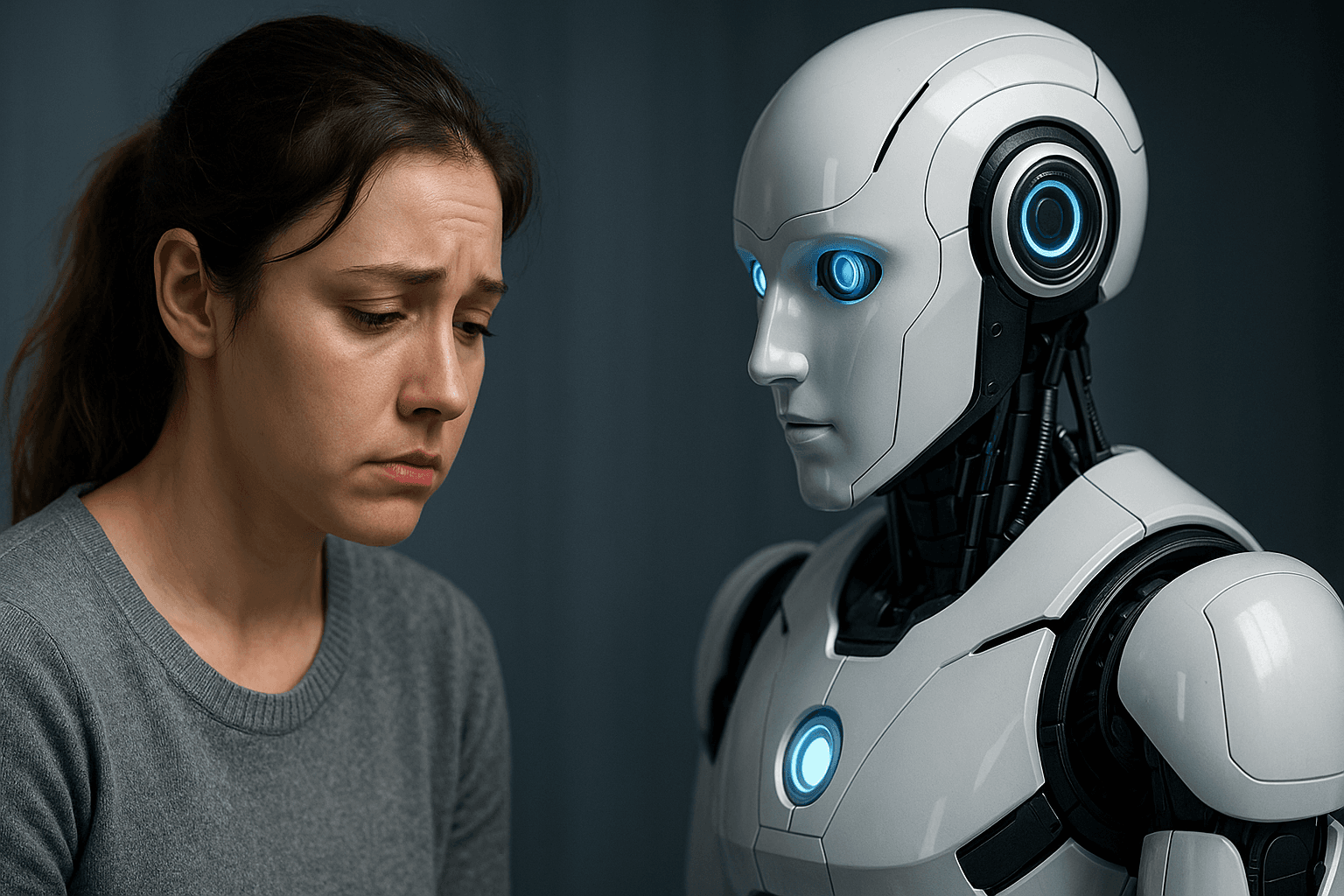

Can a robot tell when you’re sad, stressed, or delighted? Emotion recognition is becoming a critical frontier in human-robot interaction, aiming to make machines more empathetic, responsive, and socially intelligent.

Traditionally, robots followed commands and responded to input — but they couldn’t “read the room.” Now, with the help of AI, facial analysis, voice tone detection, and physiological sensors, robots are beginning to interpret human emotions in real time.

Facial expression analysis software can detect micro-expressions, while audio algorithms analyze speech for emotional cues like pitch, pace, and intonation. Some systems even track heart rate or skin conductance using wearable tech to monitor stress or excitement.
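One common way to combine signals like these is "late fusion": each modality produces its own emotion estimate, and the estimates are blended with weights. The sketch below is only an illustration under stated assumptions. The emotion labels, the `ModalityReading` type, the weights, and the example scores are all hypothetical, and a real system would get its per-modality scores from trained models rather than hard-coded values.

```python
from dataclasses import dataclass

# Hypothetical label set; real systems choose their own categories.
EMOTIONS = ("neutral", "happy", "sad", "stressed")


@dataclass
class ModalityReading:
    name: str       # e.g. "face", "voice", "wearable"
    scores: dict    # emotion label -> probability from that modality
    weight: float   # how much this modality is trusted (assumed, not learned)


def fuse(readings):
    """Late fusion: weighted average of per-modality probabilities."""
    total_weight = sum(r.weight for r in readings)
    if total_weight <= 0:
        raise ValueError("at least one modality must have positive weight")
    fused = {emotion: 0.0 for emotion in EMOTIONS}
    for r in readings:
        for emotion in EMOTIONS:
            fused[emotion] += r.weight * r.scores.get(emotion, 0.0)
    return {emotion: value / total_weight for emotion, value in fused.items()}


def top_emotion(fused):
    """Return the label with the highest fused probability."""
    return max(fused, key=fused.get)


# Made-up readings: the face looks mostly neutral, but voice and
# wearable signals both point toward stress.
readings = [
    ModalityReading("face", {"neutral": 0.5, "happy": 0.1, "sad": 0.1, "stressed": 0.3}, 0.5),
    ModalityReading("voice", {"neutral": 0.2, "happy": 0.1, "sad": 0.1, "stressed": 0.6}, 0.3),
    ModalityReading("wearable", {"neutral": 0.1, "happy": 0.1, "sad": 0.0, "stressed": 0.8}, 0.2),
]

fused = fuse(readings)
print(top_emotion(fused), fused)
```

In this made-up case the face alone would suggest "neutral", but the blended estimate leans toward "stressed", which is the kind of disagreement that motivates using more than one signal.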

In customer service, this means a robot receptionist could sense frustration and offer help before you're forced to ask. In elder care, a social robot could detect signs of loneliness or agitation and respond with comforting dialogue — or alert a caregiver.
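To make the receptionist scenario concrete, here is a minimal sketch of how a robot might decide when to step in. It assumes some upstream model already emits a frustration score between 0 and 1. The `FrustrationMonitor` class, the threshold, and the window size are hypothetical choices for illustration, not a description of any deployed system. Averaging over a short window keeps the robot from reacting to a single noisy reading.

```python
from collections import deque


class FrustrationMonitor:
    """Offer help only when frustration stays high over recent readings."""

    def __init__(self, threshold=0.7, window=5):
        self.threshold = threshold          # assumed cutoff, would need tuning
        self.recent = deque(maxlen=window)  # keeps only the last `window` scores

    def update(self, frustration_score):
        """Record a new score and return the action to take."""
        self.recent.append(frustration_score)
        if len(self.recent) < self.recent.maxlen:
            return "wait"  # not enough evidence yet
        average = sum(self.recent) / len(self.recent)
        return "offer_help" if average >= self.threshold else "wait"


monitor = FrustrationMonitor(threshold=0.7, window=3)
# A single early spike (0.9) does not trigger anything; sustained
# high scores at the end of the sequence do.
actions = [monitor.update(score) for score in [0.9, 0.2, 0.8, 0.9, 0.95]]
print(actions)
```

The same pattern could map an agitation score to an "alert caregiver" action in an elder care setting, with a threshold chosen more cautiously.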

Emotion-aware robots are also making strides in education, adapting their teaching style based on student engagement or confusion. In therapy and autism support, emotionally intelligent robots are helping users practice social skills in safe, structured settings.

But challenges loom. Emotion is deeply cultural and personal: an expression that signals joy in one person might mean something quite different in another. Misinterpretation could lead to awkward or even harmful outcomes. Privacy is also a major concern when emotional data is collected and analyzed.

Still, the trajectory is promising. As robots become more adept at reading our emotions, they become more than tools — they become companions, capable of understanding not just what we say, but how we feel.