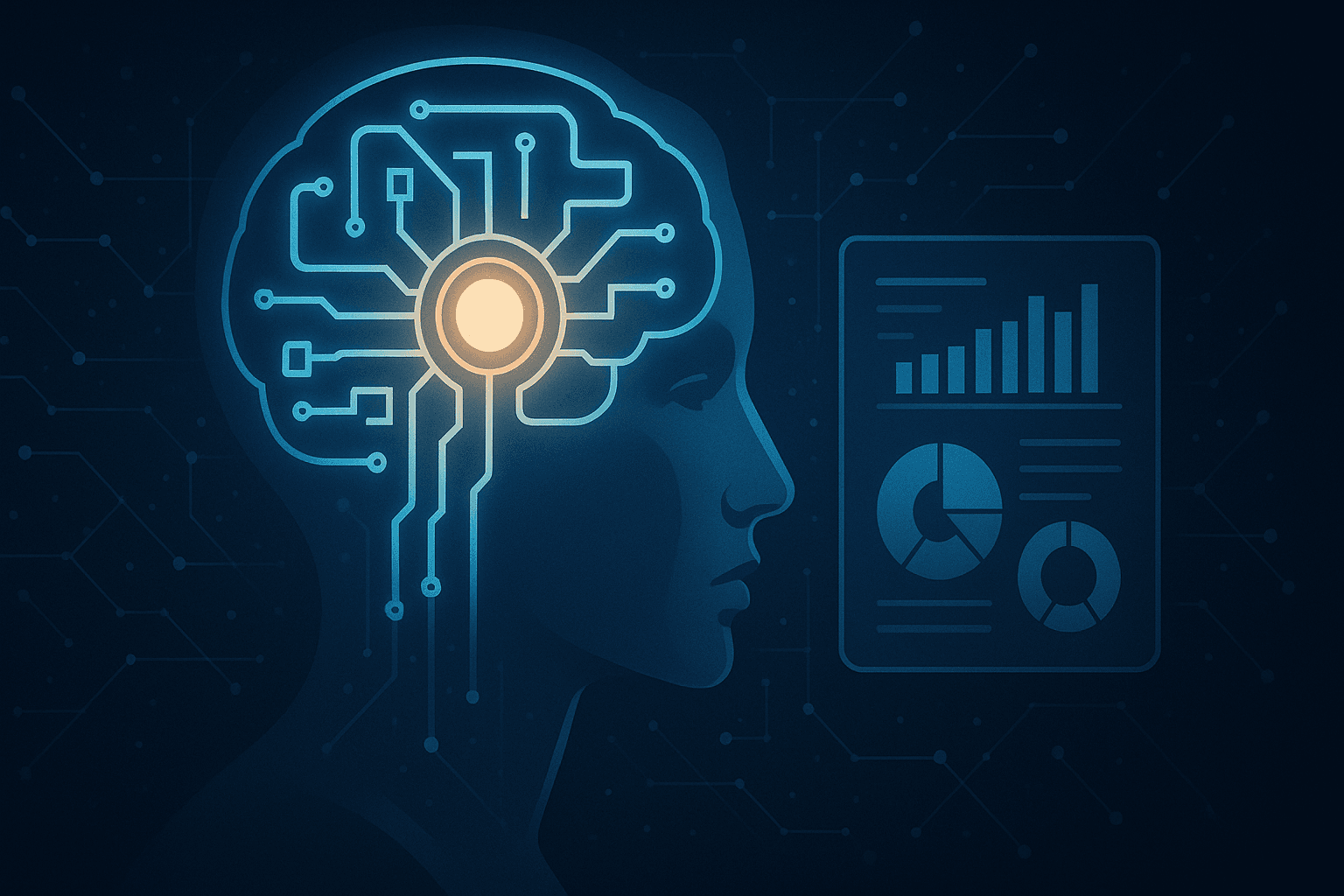

Explainable AI in Critical Systems

AI is rapidly moving into critical roles — in healthcare, finance, and even criminal justice. But when lives and liberties are on the line, one burning question arises: Can we trust the algorithm? Enter Explainable AI (XAI) — a growing field focused on making machine decisions transparent and understandable to humans.

Traditional machine learning models, especially deep neural networks, are often black boxes. They may output a diagnosis or a credit score, but can’t easily explain why. That’s a problem when patients need second opinions or regulators demand accountability.

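XAI aims to open the box. It provides insight into which features influenced a decision, how confident the model was, and what alternatives it considered. Tools such as SHAP and LIME attribute a prediction to individual input features, while saliency maps highlight the parts of an input (for example, the pixels of an image) that most affected the output. Together they help dissect decisions and produce human-readable justifications.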
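To make feature attribution concrete, here is a minimal sketch of the idea behind SHAP: Shapley values, computed exactly for a tiny model. It does not use the SHAP library itself. The scoring function `toy_credit_score`, its weights, the applicant, and the baseline are all hypothetical and chosen only for illustration. Exact enumeration like this is only practical for a handful of features; real tools rely on approximations.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley attributions for one input `x`, relative to `baseline`.

    Features outside a coalition are replaced by their baseline value.
    The cost grows exponentially with the number of features.
    """
    n = len(x)
    features = range(n)
    phi = [0.0] * n

    def value(subset):
        # Features in `subset` keep their real value; the rest use the baseline.
        z = [x[i] if i in subset else baseline[i] for i in features]
        return predict(z)

    for i in features:
        others = [j for j in features if j != i]
        for k in range(len(others) + 1):
            # Shapley weight for coalitions of size k that exclude feature i.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_credit_score(z):
    # Hypothetical linear scoring model, not a real credit formula.
    income, debt, late_payments = z
    return 0.5 * income - 0.3 * debt - 2.0 * late_payments


applicant = [60.0, 20.0, 3.0]  # hypothetical applicant
baseline = [50.0, 10.0, 0.0]   # hypothetical "typical" applicant

phi = shapley_values(toy_credit_score, applicant, baseline)
for name, contribution in zip(["income", "debt", "late_payments"], phi):
    print(f"{name}: {contribution:+.2f}")
```

Each number says how much that feature moved the score away from the baseline applicant's score, and the contributions add up to the total difference between the two scores. That additive property is what lets an explanation read as "late payments lowered the score the most, higher income partly offset it."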

In critical systems, this transparency builds trust. A self-driving car must not only brake in time, but also be able to report whether it detected a pedestrian, a shadow, or a plastic bag. In finance, auditors must understand why an AI flagged a transaction as fraudulent, or why it failed to.

Governments and institutions are catching on. The EU’s AI Act mandates transparency for high-risk applications. Meanwhile, AI used in courtrooms or hiring systems is under increasing scrutiny for bias and opacity.

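Yet a trade-off exists. Simpler models, such as linear models or small decision trees, are easier to explain but can be less accurate than deep networks on complex tasks, so gaining explainability can mean giving up some accuracy. The goal isn’t to make AI perfectly interpretable, but sufficiently accountable, especially when it is used in decisions that affect human lives.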

In the end, Explainable AI is about ethics, trust, and empowerment. It reminds us that intelligence isn’t just about answers — it’s about understanding how we get them.